Artificial Intelligence (AI) - good? bad? That will be something each of us will wrestle with in the coming months and years. AI is already impacting the world of ‘communications’ in ways that some think are good and others think are bad.

What I’ll attempt to do this year is look at different ways people in various parts of the ‘communications industry’ are using AI, and what they believe the future holds for them and their business. I spent more than 40 years in Journalism and almost 15 years in Corporate Communications, so I’ll definitely focus on those areas. I’ll also look into other areas of Communications to see what we can discover.

Intelligence

Let’s begin by asking a basic question — what is ‘Intelligence?’

‘the ability to learn or understand or to deal with new or trying situations … the ability to apply knowledge to manipulate one’s environment or to think abstractly as measured by objective criteria (such as tests)’ (Merriam-Webster)

‘the ability to acquire and apply knowledge and skills’ (Oxford Languages)

Next, let’s look at the history of ‘Artificial Intelligence.’ Journalists may find it helpful to share a little of the background of AI when they do stories or series on the subject.

Artificial Intelligence is “the theory and development of computer systems able to perform tasks that normally require human intelligence, such as visual perception, speech recognition, decision-making, and translation between languages.” (Oxford Languages) “Artificial intelligence leverages computers and machines to mimic the problem-solving and decision-making capabilities of the human mind.” (IBM)

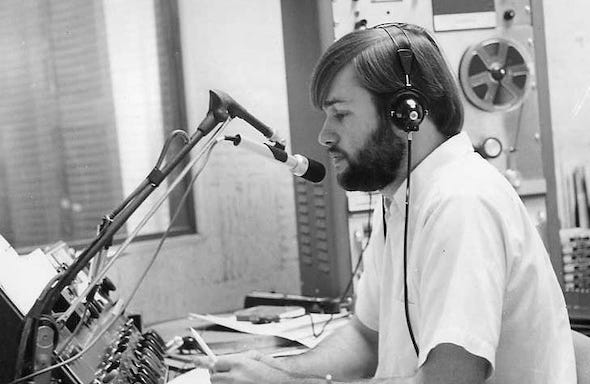

I began writing about AI in 1968. That was my first full year as a broadcast journalist. I remember visiting a local bank that had installed massive IBM mainframe computers to record customer financial records. They called it their “Data Processing Center.” One side of a giant room was filled with large computers that had magnetic tape reels recording and storing information. The other side of the room had bank employees adding information to computer punch cards and printing information on continuous form paper.

I researched the history of computers for news stories and it took me back to 1801 and Frenchman Joseph Marie Jacquard. I also came across the names of Charles Babbage, Herman Hollerith, Alan Turing, David Packard and Bill Hewlett, J.V. Atanasoff and Clifford Berry, John Mauchly and J. Presper Eckert, Grace Hopper, Thomas Johnson Watson Jr., John Backus, Jack Kilby, Robert Noyce and Douglas Engelbart. While all of those people played important roles in the development of computers, I found Alan Turing to be one of the most interesting.

A.M. Turing was a British mathematician who made a name for himself in the 1930s and 40s with his ideas for “automatic machines.” Turing is credited by some historians with shortening World War II by pioneering technology that decrypted secret Nazi communications. The machine he developed was known as the Bombe. Turing asked a very simple question to arrive at his development – Can machines think? That question and the answers he developed led to what became known as the Turing Machine and later as computers and artificial intelligence. You can read more about Turing’s ideas here.

I continued to cover the development of mainframe computers and other “learning machines” for the next 40+ years. That included operating systems, software, data memory and sharing, Ethernet, mini-computers, personal computers and mobile computing devices. From a news coverage perspective, I saw us go from manual typewriters and carrying dimes around all day to call the newsroom from pay phones to a completely digital newsroom with laptop computers and BlackBerry phones for reporters in the field. That was a huge change from 1968 to 2009, the year I retired. Today’s reporters use iPads and iPhones in the field, and we have seen even more growth in the last fifteen years. What will the future hold?

AI in the Early 2000s

After completing a book about martial arts, I talked with my publisher about a book that would look at the history of humanity’s quest for immortality. I researched a variety of topics for three years in four primary categories and wrote A History of Man’s Quest for Immortality. The 784-page book was published in 2007. The original manuscript was 1200 pages, which was too long for the type of paperback we were going to print, so I edited out more than 400 pages to keep it under the maximum of 800 pages.

I mention that because there was a lot more information available about Artificial Intelligence in 2006, when I wrote the third category (section) of the book. It is titled “The Science of Immortality” and includes what was then up-to-date information about Artificial Intelligence. Here are several quotes from the book that look at the history of AI as it stood at that time.

Ray Kurzweil is well known for being an enthusiastic futurist. He has written many books and essays about the potential for human engineering that could extend human life by hundreds of years and eventually lead to unending physical life (immortality). His books deal with many of the subjects important to Immortality Science: artificial intelligence, robotics, nanotechnology, genetics, and transhumanism. Kurzweil’s first book was titled The Age of Intelligent Machines (1990) and dealt with Artificial Intelligence (AI). He also wrote The 10% Solution for a Healthy Life (1994), The Age of Spiritual Machines: When Computers Exceed Human Intelligence (1999), Fantastic Voyage: Live Long Enough to Live Forever (2004) and The Singularity is Near: When Humans Transcend Biology (2005).

Kurzweil is concerned about living long enough to live forever. He believes humanity will walk the road to Immortality over what he calls ‘Three Bridges.’ The first bridge is staying healthy long enough to get to the second bridge … The second bridge is the biotechnical revolution that will allow humans to control their own genes by blocking genes that cause disease and promoting new genes that will slow and eventually stop the aging process. The third bridge is a combination of nanotechnology and artificial intelligence. Kurzweil believes the day is coming when scientists will be able to introduce millions of cell-sized robots (nanobots) into the human blood stream that will repair arteries, bones, muscles and brain cells. He also sees the day when people will be able to download improvements to their genetic coding from the Internet … He won the 1999 National Medal of Technology Award and was inducted into the Inventors Hall of Fame in 2002. p 424

AI (artificial intelligence) has been defined as the “science and engineering of making intelligent machines” (John McCarthy, Stanford University). Computer programs that imitate human thinking is another way to explain the concept of AI. The programs can often solve problems much faster than humans with a lower error factor (often called “expert systems”). p 424

Work with AI technology exploded during the 1980s and 1990s as scientists worked together to advance their work. The American Association for Artificial Intelligence (AAAI) was started in 1979 by some of the leaders of early AI technology. They included John McCarthy, Allen Newell, Marvin Minsky and Edward Feigenbaum. The stated purpose of AAAI is to be “a nonprofit scientific society devoted to advancing the scientific understanding of the mechanisms underlying thought and intelligent behavior and their embodiment in machines. AAAI also aims to increase public understanding of artificial intelligence, improve the teaching and training of AI practitioners, and provide guidance for research planners and funders concerning the importance and potential of current AI developments and future directions” (American Association for Artificial Intelligence, 2006). p 425

Bruce J. Klein is an immortalist. He co-founded the Immortality Institute in 2002. Klein is also President of Novamente LLC, a Maryland software company focusing on Artificial General Intelligence (AGI). He presents the goal of AGI as being ‘the creation of broad human-like and transhuman intelligence, rather than narrowly smart systems that can operate only as tools for human operators in well-defined domains.’ Klein believes ‘the best way to deal with the terror of death and oblivion is to live forever.’ He defines the immortalist’s philosophy as ‘humans only have one life and one chance to live. There are no alternative states to the current state other than oblivion. Thus, what we experience now and the life we have now is the only alternative … We only die – no afterlife, no second chances, no reincarnation. Thus, this only leaves us with one simple option – embrace life.’ The stated mission of the Immortality Institute is to ‘conquer the blight of involuntary death.’ Klein believes in the ever-expanding lifespan. He points to increased lifespans during the past 200 years and quotes Dr. Ronald Klatz of the American Academy of Anti-Aging Medicine who believes the lifespan of baby boomers could reach 120 years of age and the generation after them could approach 150 years. p 436

In the April 7, 2000 edition of Science Magazine, John Harris wrote about the Intimations of Immortality. He pointed to new research (at that time) that showed aging and death might not be inevitable. He wrote about cloned human embryonic stem cells that could constantly regenerate organs and tissues and switching off genes that trigger aging. Harris believes life can be extended beyond the outer limits of current death (beyond 120 years) and that the moral and ethical aspects of it should be discussed now. He presented the real possibility that only a wealthier class of people might be able to afford the medical technology leading to immortality, presenting the world with the possibility of mortals and immortals living together, leading to new social pressures. p 437

Many of the concepts and technologies considered in this study for possible use in future space missions are elements of a diverse field of research known as ‘artificial intelligence’ or simply AI. The term has no universally accepted definition or list of component subdisciplines, but is commonly understood to refer to the study of thinking and perceiving as general information processing functions – the science of intelligence. Although, in the words of one researcher, ‘It is completely unimportant to the theory of AI who is doing the thinking, man or computer’ (Nilsson, 1974), the historical development of the field has followed largely an empirical and engineering approach. In the past few decades, computer systems have been programmed to prove theorems, diagnose diseases, assemble mechanical equipment using a robot hand, play games such as chess and backgammon, solve differential equations, analyze the structure of complex organic molecules from mass-spectrogram data, pilot vehicles across terrain of limited complexity, analyze electronic circuits, understand simple human speech and natural language text, and even write computer programs according to formal specifications – all of which are analogous to human mental activities usually said to require some measure of “intelligence.” If a general theory of intelligence eventually emerges from the AI field, it could help guide the design of intelligent machines as well as illuminate various aspects of rational behavior as it occurs in humans and other animals. Robert A. Freitas Jr., NASA Researcher, p 440

Next Newsletter

What about AI in 2024 — almost 20 years after I wrote about Artificial Intelligence in my book? Much has changed and advanced since then. That’s the topic for next week’s newsletter.

Comments and Questions Welcome

I hope these thoughts are helpful to you. Please share your comments and questions and I’ll respond as quickly as I can. If you like what we’re doing in this newsletter, please let your friends know about it so they can subscribe.

Newsletter Purpose

The purpose of this newsletter is to help people who work in the fields of journalism, media, and communications find ways to do their jobs that are personally fulfilling and helpful to others. I also want to help news consumers know how to find news sources they can trust.