Artificial Intelligence Is Changing Journalism

Is that good or bad for truth?

Did you know that thousands of news stories are written by machines each year? Do you know which ones? How is that affecting journalists now, and what does the future of journalism look like? I’ll share my answers to those questions in this article, but first a little background.

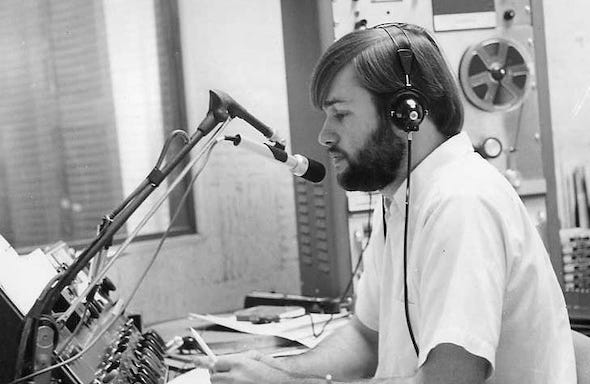

I began writing about Artificial Intelligence (AI) in 1968. That was my first full year as a broadcast journalist. I remember visiting a local bank that had installed massive IBM mainframe computers to store customer financial records. They called it their “Data Processing Center.” One side of a giant room was filled with large computers that had magnetic tape reels recording and storing information. The other side of the room had bank employees adding information to computer punch cards and printing information on continuous form paper.

I researched the history of computers for news stories and it took me back to 1801 and Frenchman Joseph Marie Jacquard, whose loom was controlled by punched cards. Jump ahead more than a century and we find Alan Turing. Turing was a British mathematician who made a name for himself in the 1930s and 40s with his ideas for “automatic machines.” Turing is credited by some historians with shortening World War II by pioneering technology that decrypted secret Nazi communications. The machine he helped develop was known as the Bombe. Turing also asked a very simple question: Can machines think? His ideas led to what became known as the Turing machine and helped lay the groundwork for modern computers and artificial intelligence.

I continued to cover the development of mainframe computers and other “learning machines” for the next 40+ years. That included operating systems, software, data memory and sharing, Ethernet, mini-computers, personal computers, and mobile computing devices. From a news coverage perspective, I saw us go from manual typewriters, and carrying dimes around all day to call the newsroom from pay phones, to a completely digital newsroom with laptop computers and BlackBerry phones for reporters in the field. That was a huge change from 1968 to 2009, the year I retired from television news. I wondered what the future would hold for journalism.

Conversational AI

The advancement of AI in the last several years has led to computer programs that can communicate conversationally. OpenAI is an Artificial Intelligence research and deployment company. Here’s how OpenAI explains the process:

The dialogue format makes it possible for ChatGPT to answer followup questions, admit its mistakes, challenge incorrect premises, and reject inappropriate requests. ChatGPT is a sibling model to InstructGPT, which is trained to follow an instruction in a prompt and provide a detailed response. OpenAI

We’ve trained language models that are much better at following user intentions than GPT-3 while also making them more truthful and less toxic, using techniques developed through our alignment research. These InstructGPT models, which are trained with humans in the loop, are now deployed as the default language models on our API. OpenAI

The outcome of AI research is quite remarkable. Machines can learn. Chatbots are conducting dialogs with humans, answering questions, following directions, researching and writing reports, doing homework, and writing books. Two years ago OpenAI’s GPT-3 platform had more than 300 apps covering everything from education to creativity, games, and productivity. What about today? Here’s a short list:

OpenAI software products can:

Create study notes after providing a topic.

Create questions for any type of interview.

Chat with humans through chatbots.

Create any kind of recipe from the given list of ingredients.

Create an overall structure for the research topic essay.

Create ideas related to VR games and fitness.

Turn meeting notes into a summary.

Write Python docstrings.

Turn any text into color.

Become a friend to chat with to kill boredom.

Convert JavaScript expressions into Python.

Create simple SQL queries.

Answer questions related to language models.

Create a spreadsheet for the given data.

Find and fix the bug in the code.

Create a product name.

Create ad copy from a product description.

Answer factual questions.

Explain code in simple language.

Translate between programming languages.

Convert movie titles into emojis.

Translate spoken languages.

Correct grammar in any language.

Answer questions based on existing knowledge. OpenAI Statistics

How did machines learn to do all of that so fast?

To create a reward model for reinforcement learning, we needed to collect comparison data, which consisted of two or more model responses ranked by quality. To collect this data, we took conversations that AI trainers had with the chatbot. We randomly selected a model-written message, sampled several alternative completions, and had AI trainers rank them. Using these reward models, we can fine-tune the model using Proximal Policy Optimization. We performed several iterations of this process. OpenAI
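For readers who want to see the idea in the quote above in concrete form, here is a minimal sketch in Python. It is not OpenAI's code. It assumes that a trainer's ranking is broken into "preferred versus rejected" pairs and that the reward model is penalized with a standard pairwise logistic loss whenever it scores the rejected reply higher. The function names and the toy word-count scorer are hypothetical and exist only for illustration.

```python
import math
from itertools import combinations


def ranking_to_pairs(ranked):
    """Turn a best-first ranking into (preferred, rejected) pairs."""
    # combinations() keeps input order, so the first item of each pair
    # is always the one the trainer ranked higher.
    return list(combinations(ranked, 2))


def pairwise_loss(score_preferred, score_rejected):
    """Pairwise logistic loss: -log(sigmoid(preferred - rejected))."""
    diff = score_preferred - score_rejected
    return math.log1p(math.exp(-diff))


def mean_ranking_loss(ranked, reward_fn):
    """Average the pairwise loss over every pair implied by one ranking."""
    pairs = ranking_to_pairs(ranked)
    total = sum(pairwise_loss(reward_fn(a), reward_fn(b)) for a, b in pairs)
    return total / len(pairs)


def toy_reward(text):
    """Hypothetical stand-in for a reward model: more words, higher score."""
    return float(len(text.split()))


if __name__ == "__main__":
    # Made-up replies, ranked best first by an imaginary trainer.
    ranked_replies = [
        "The quake struck at dawn near the coast.",
        "There was a quake.",
        "Quake.",
    ]
    print(ranking_to_pairs(ranked_replies))
    print(mean_ranking_loss(ranked_replies, toy_reward))
```

The loss is small when the scorer agrees with the human ranking and large when it disagrees. Training a real reward model means adjusting the scorer to push that number down. The fine-tuning step with Proximal Policy Optimization that the quote mentions is a separate stage and is not shown here.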

Journalism Impact

AI is already impacting social media and other communication platforms. As I mentioned at the beginning of this article, it’s also impacting journalism and has been for years.

You may remember that the Los Angeles Times was able to publish a report about an earthquake three minutes after it happened in 2014. How did the LA Times do that nine years ago? Through a software robot called Quakebot. It wrote automated articles based on U.S. Geological Survey data. [Recent examples of Quakebot stories.]
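To give a feel for how a program can turn a data alert into a publishable sentence, here is a minimal sketch in Python. It is not Quakebot's actual code, which I have not seen. It assumes the simplest possible approach: a fixed sentence template filled in from one earthquake record. The field names, the magnitude threshold, and the sample event are all made up for illustration and do not reflect the real U.S. Geological Survey feed.

```python
from datetime import datetime, timezone

# Hypothetical threshold: smaller quakes are not worth a story.
MIN_MAGNITUDE = 3.0

# Hypothetical template; a real newsroom would have its own wording.
TEMPLATE = (
    "A magnitude {magnitude:.1f} earthquake was reported at {when}, "
    "{distance_miles} miles from {place}, "
    "according to the U.S. Geological Survey."
)


def write_quake_story(event):
    """Return a one-sentence story for an event, or None if it is too small."""
    if event["magnitude"] < MIN_MAGNITUDE:
        return None
    when = event["time"].strftime("%Y-%m-%d %H:%M UTC")
    return TEMPLATE.format(
        magnitude=event["magnitude"],
        when=when,
        distance_miles=event["distance_miles"],
        place=event["place"],
    )


if __name__ == "__main__":
    # Made-up sample record, not real USGS data.
    sample_event = {
        "magnitude": 4.4,
        "place": "Exampleville",
        "distance_miles": 6,
        "time": datetime(2014, 3, 17, 13, 25, tzinfo=timezone.utc),
    }
    print(write_quake_story(sample_event))
```

Nothing in this sketch "understands" the earthquake. The speed comes from skipping the writing step entirely, which is also why a human editor still needs to check that the incoming data is right before the story goes out.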

News organizations use AI regularly to cover news, sports, and weather events. One notable example is The Washington Post's Heliograf system. In another example, editors for The Guardian gave an assignment to an AI bot in 2020 to write an essay from scratch. The writing assignment was for the GPT-3 bot to convince people that “robots come in peace.” It’s an interesting read for many reasons. It demonstrates an AI bot’s ability to receive an assignment, understand the assignment, research the assignment, write the assignment conversationally, and meet a deadline.

There’s no sign that this trend in journalism is going to slow down.

In the past year, you have most likely read a story that was written by a bot. Whether it’s a sports article, an earnings report or a story about who won the last congressional race in your district, you may not have known it but an emotionless artificial intelligence perhaps moved you to cheers, jeers or tears. By 2025, a bot could be writing 90% of all news, according to Narrative Science, whose software Quill turns data into stories. Wharton School of Business

AI researchers are improving the ability of machines every day to do more and do it faster. In addition to reporting news, sports, and weather, machines can research a subject and write a conversational-style report much faster than humans.

That’s concerning to me on many levels. It’s hard enough to trust that humans will be curious, skeptical, objective, and accurate. Can we trust what comes from an AI report? Even AI experts see limitations in these areas.

Despite making significant progress, our InstructGPT models are far from fully aligned or fully safe; they still generate toxic or biased outputs, make up facts, and generate sexual and violent content without explicit prompting. But the safety of a machine learning system depends not only on the behavior of the underlying models, but also on how these models are deployed. To support the safety of our API, we will continue to review potential applications before they go live, provide content filters for detecting unsafe completions, and monitor for misuse.

A byproduct of training our models to follow user instructions is that they may become more susceptible to misuse if instructed to produce unsafe outputs. Solving this requires our models to refuse certain instructions; doing this reliably is an important open research problem that we are excited to tackle.

Further, in many cases aligning to the average labeler preference may not be desirable. For example, when generating text that disproportionately affects a minority group, the preferences of that group should be weighted more heavily. Right now, InstructGPT is trained to follow instructions in English; thus, it is biased towards the cultural values of English-speaking people. We are conducting research into understanding the differences and disagreements between labelers’ preferences so we can condition our models on the values of more specific populations. More generally, aligning model outputs to the values of specific humans introduces difficult choices with societal implications, and ultimately we must establish responsible, inclusive processes for making these decisions. OpenAI

Journalism’s Future?

So, what about the future of journalism? As I reported last year, younger people get the majority of their news from social media. The average time young people spend reading a news item on social media is about 20 seconds. That’s barely enough time to read the headline and the first couple of sentences before they move on to the next story, video, chat, text, or whatever they’re doing.

I also addressed this question in a previous article: Can We Trust News On Social Media? If chatbots can converse at a human level on social media, how will people know whether the news they read is from a human or a chatbot? Some computer scientists are testing their own AI programs to detect AI-written materials. One example is GPTZero.

What About Consumers of News?

I think the future of journalism for news consumers is going to be more challenging. It’s important to remember that journalism is not just for journalists — it’s for readers, listeners, and viewers. The “Free Press” exists for citizens. It exists for people who “consume” the news. News consumers need to know that they can trust what they read, what they hear, and what they see. The current trajectory of Artificial Intelligence is going to make that more difficult. Even news opinion and analysis are on the table for AI.

I’ll do my best to stay on top of this topic and let you know how AI continues to impact journalism in the future.

Next Newsletter

We will share another guest article by a former journalist in the next Real Journalism Newsletter. She had a great career in television news, then took those skills and built another great career in government communications.

Comments and Questions Welcome

I hope these thoughts are helpful to you as a journalist or news consumer. Please share your comments and questions and I’ll respond as quickly as I can. If you like what we’re doing in this newsletter, please let your friends know about it so they can subscribe.

Newsletter Purpose

The purpose of this newsletter is to help journalists understand how to do real journalism and to help the public find news they can trust on a daily basis. It’s a simple purpose, but complicated to accomplish. I’ll do my best to make it as clear as I can in future newsletters.